RAG & Agentic AI: First step to transition to AI Roles

Generative AI, RAG, LangChain, LangGraph and Multi Agent AI Systems explained

Experienced software professionals transitioning into AI Engineer or AI Architect roles often learn how to use Generative AI tools but struggle to explain how enterprise AI systems are actually designed.

Modern enterprise AI systems are not built using standalone LLM prompts.

They are built using:

- RAG Architecture

- Embeddings

- LangChain pipelines

- LangGraph workflows

- Multi Agent AI Systems

- Agentic AI Architectures like Supervisor and Planner–Executor

This article explains how Generative AI evolves into Agentic AI architecture in enterprise systems and how concepts like embeddings, RAG, LangChain, LangGraph and CrewAI work together to build autonomous AI workflow systems.

What is Generative AI in Enterprise Software?

Generative AI refers to systems that can create:

- Text

- Code

- Summaries

- Decisions

- Structured outputs

using Large Language Models (LLMs) trained on massive datasets.

Unlike traditional Machine Learning models, which predict a label or a number from input features, Generative AI produces new content conditioned on the context it is given.

At its core:

Previous Tokens + Context

↓

Probability Distribution

↓

Next Most Likely Token
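
The flow above can be illustrated with a minimal sketch. The scores in `logits` are made-up numbers for a hypothetical three-word vocabulary, not output from a real model; the point is only to show how scores become a probability distribution from which the next token is picked.

```python
import math

def softmax(logits):
    """Turn raw scores into probabilities that sum to 1."""
    peak = max(logits.values())  # subtract the max for numerical stability
    exps = {tok: math.exp(score - peak) for tok, score in logits.items()}
    total = sum(exps.values())
    return {tok: value / total for tok, value in exps.items()}

# Hypothetical scores a model might assign after the context "The invoice is"
logits = {"overdue": 2.0, "paid": 1.0, "purple": -3.0}

probs = softmax(logits)
next_token = max(probs, key=probs.get)  # greedy decoding: take the most likely token
print(next_token)
```

Greedy selection is used here for simplicity. Production systems often sample from the distribution instead (for example with a temperature setting), which is why the same prompt can produce different outputs.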

This probabilistic prediction leads to:

- Code generation

- Requirement summarization

- Document analysis

- Query generation

- API explanation

However, standalone LLMs cannot access enterprise data or execute workflows reliably. Closing the data gap is the job of RAG architecture, and closing the workflow gap is the job of the agent frameworks covered later.

Role of Machine Learning in GenAI Systems

Traditional Machine Learning still plays a crucial role in enterprise AI systems.

ML models are responsible for:

- Detection

- Classification

- Forecasting

- Recommendation

Example enterprise pipeline:

Drone Video

↓

Object Detection ML Model

↓

Detected Persons Count

↓

LLM

↓

Report / Explanation

ML extracts facts

LLM interprets facts

Agentic AI combines both into decision-making systems.
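
A rough sketch of this hand-off, under stated assumptions: `detect_persons` is a stand-in for a real object detection model and simply reads pre-labelled counts, and the LLM call itself is omitted. The sketch only shows how facts extracted by ML become the input an LLM is asked to interpret.

```python
def detect_persons(frames):
    """Stand-in for an object detection model: returns a person count per frame."""
    return [frame["persons"] for frame in frames]  # hypothetical pre-labelled frames

def build_report_prompt(counts):
    """Turn the extracted facts into a prompt for an LLM to interpret."""
    return (
        "You are a site safety analyst.\n"
        f"Person counts per frame: {counts}\n"
        f"Peak count: {max(counts)}\n"
        "Write a short report explaining these observations."
    )

frames = [{"persons": 2}, {"persons": 5}, {"persons": 3}]
counts = detect_persons(frames)       # ML extracts facts
prompt = build_report_prompt(counts)  # the LLM would interpret these facts
print(prompt)
```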

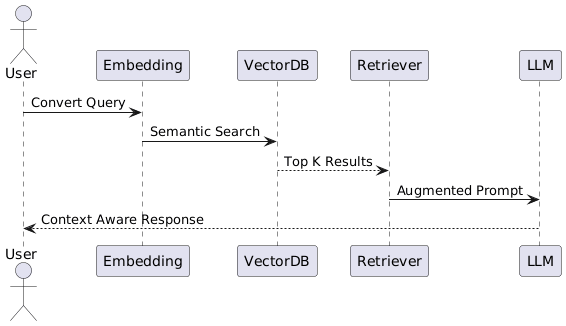

What is RAG Architecture and How it Works

RAG (Retrieval-Augmented Generation) enables LLMs to respond using enterprise-specific knowledge instead of relying only on their pre-training data.

Instead of:

User → LLM → Response

Enterprise RAG Flow:

User Query

↓

Embedding Model

↓

Vector Database Search

↓

Relevant Enterprise Documents

↓

Prompt Augmentation

↓

LLM Generates Grounded Response
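
The retrieval and prompt augmentation steps of this flow can be sketched without any framework. Everything here is an assumption made for illustration: `embed` is a toy bag-of-words function rather than a real embedding model, the "vector database" is a Python list, and the three documents are invented. The final LLM call is left out; the sketch stops at the augmented prompt.

```python
import math
import re
from collections import Counter

def embed(text):
    """Toy embedding: word counts (a real system would call an embedding model)."""
    return Counter(re.findall(r"[a-z]+", text.lower()))

def cosine(a, b):
    """Cosine similarity between two sparse word-count vectors."""
    dot = sum(a[word] * b[word] for word in a)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0

# Hypothetical enterprise documents, embedded once at indexing time.
documents = [
    "Employees may carry over up to five days of annual leave.",
    "Password resets are handled through the IT self-service portal.",
    "Expense claims must be submitted within thirty days.",
]
index = [(doc, embed(doc)) for doc in documents]

def retrieve(query, k=1):
    """Return the k documents most similar to the query."""
    query_vec = embed(query)
    ranked = sorted(index, key=lambda item: cosine(query_vec, item[1]), reverse=True)
    return [doc for doc, _ in ranked[:k]]

def augment(query, context_docs):
    """Build the grounded prompt that would be sent to the LLM."""
    context = "\n".join(f"- {doc}" for doc in context_docs)
    return f"Answer using only this context:\n{context}\n\nQuestion: {query}"

query = "How do I reset my password?"
prompt = augment(query, retrieve(query))
print(prompt)
```

Because the model is instructed to answer from the retrieved text, the response can be traced back to a specific document, which is what "grounded" means in this flow.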

RAG Architecture is used in:

- Enterprise knowledge bots

- ITSM automation

- Legal document search

- Policy compliance systems

How Embeddings Work in Vector Databases

Embeddings convert text into numerical vectors representing semantic meaning.

Example:

"Account balance is low"

→ [0.231, -0.445, 0.981 …]

Similar sentences like:

"Balance insufficient"

are stored nearby in multi-dimensional vector space.

This enables:

- Semantic search (for example, ranking by cosine similarity)

- Document similarity

- Context retrieval

- Recommendation
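
To make "stored nearby" concrete, here is a small sketch that compares vectors with cosine similarity. The three-dimensional vectors are hypothetical values chosen for illustration (the first reuses the numbers from the example above); real embedding models emit hundreds or thousands of dimensions.

```python
import math

def cosine_similarity(a, b):
    """Cosine of the angle between two vectors: 1.0 means same direction."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

# Hypothetical embeddings, not produced by a real model.
vectors = {
    "Account balance is low": [0.231, -0.445, 0.981],
    "Balance insufficient": [0.250, -0.400, 0.950],
    "Flight delayed by two hours": [-0.800, 0.500, 0.100],
}

query_text = "Account balance is low"
scores = {
    text: cosine_similarity(vectors[query_text], vec)
    for text, vec in vectors.items()
    if text != query_text
}
closest = max(scores, key=scores.get)
print(closest)
```

The sentence about balances scores close to 1.0 while the unrelated sentence scores below zero, which is the property a vector database exploits when it searches for nearest neighbours.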

Embeddings are stored in:

- FAISS

- Pinecone

- Chroma

These vector stores are used heavily in enterprise RAG pipelines.

LangChain – LLM Application Orchestration

LangChain helps developers build AI applications by:

- Prompt templating

- Tool integration

- Memory handling

- RAG pipelines

- API calling

Typical LangChain Pipeline:

User Input

↓

Prompt Template

↓

Retriever

↓

LLM

↓

Output Parser

↓

Structured Response
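
The stages above can be sketched in plain Python. This is deliberately not LangChain's API: `retriever`, `fake_llm`, and `output_parser` are hypothetical stand-ins that return canned data so the example runs offline. It only shows how each stage's output feeds the next.

```python
import json

PROMPT_TEMPLATE = (
    "Context: {context}\n"
    "Question: {question}\n"
    "Reply as JSON with keys 'answer' and 'source'."
)

def retriever(question):
    """Hypothetical retriever; a real one would query a vector store."""
    return "Refunds are processed within 7 business days."

def fake_llm(prompt):
    """Stand-in for a model call so the sketch runs without network access."""
    return json.dumps(
        {"answer": "Refunds take up to 7 business days.", "source": "refund-policy"}
    )

def output_parser(raw_text):
    """Convert the model's text into a structured response."""
    return json.loads(raw_text)

def run_pipeline(question):
    context = retriever(question)
    prompt = PROMPT_TEMPLATE.format(context=context, question=question)
    raw = fake_llm(prompt)
    return output_parser(raw)

response = run_pipeline("How long do refunds take?")
print(response["answer"])
```

In this sketch the retriever runs before the template is filled, since the template needs the retrieved context. A framework such as LangChain packages each of these stages as a reusable component so they can be swapped independently.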

LangChain is suitable for:

- AI chatbots

- Document summarizers

- Resume analyzers

However, managing complex, multi-step workflows with LangChain alone becomes difficult.

LangGraph – AI Workflow Orchestration Engine

LangGraph allows developers to design:

- Multi step reasoning

- Stateful agents

- Conditional execution

- Retry logic

- Tool selection

LangGraph Execution Model:

Planner Node

↓

Tool Executor

↓

Memory Update

↓

Reviewer Node

↓

Response Generator
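
The execution model above, including conditional execution and retry logic, can be sketched as a small state machine. This is a framework-free illustration and not LangGraph's API: each node is a plain function that updates a shared `state` dictionary and returns the name of the next node. The flaky tool and the ticket text are invented for the example.

```python
def planner(state):
    state["plan"] = ["lookup_ticket"]
    return "tool_executor"

def tool_executor(state):
    state["attempts"] += 1
    # Hypothetical flaky tool: fails on the first attempt, succeeds on the second.
    state["tool_result"] = None if state["attempts"] < 2 else "ticket 42: disk full"
    return "memory_update"

def memory_update(state):
    state["history"].append(state["tool_result"])
    return "reviewer"

def reviewer(state):
    # Conditional edge: retry the tool if it produced nothing, up to 3 attempts.
    if state["tool_result"] is None and state["attempts"] < 3:
        return "tool_executor"
    return "response_generator"

def response_generator(state):
    state["response"] = f"Resolved using: {state['tool_result']}"
    return None  # no next node, so the run ends

NODES = {
    "planner": planner,
    "tool_executor": tool_executor,
    "memory_update": memory_update,
    "reviewer": reviewer,
    "response_generator": response_generator,
}

def run(start, state):
    """Follow the edges until a node returns None."""
    node = start
    while node is not None:
        node = NODES[node](state)
    return state

final = run("planner", {"attempts": 0, "history": []})
print(final["response"])
```

The shared state is what makes the workflow stateful: the reviewer can route back to the tool executor because the attempt count and the last result persist between nodes.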

LangChain vs LangGraph – When to Use What

| Requirement | Use |

|---|---|

| Simple AI chatbot | LangChain |

| RAG Knowledge Bot | LangChain |

| Multi Step Workflow | LangGraph |

| Agent Coordination | LangGraph |

| Long Running Tasks | LangGraph |

LangChain = LLM Service Layer

LangGraph = AI Workflow Engine

CrewAI and Multi Agent AI Systems

CrewAI enables multiple AI agents to collaborate as a team.

Each agent has:

- Role

- Goal

- Tools

- Memory

Example Multi Agent Travel Planner:

Research Agent → Hotel Search

Pricing Agent → Price Check

Review Agent → Sentiment Analysis

Planner Agent → Itinerary Creation
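
As a rough sketch of the travel planner above, the following models each agent with a role, a goal, a tool, and a memory. It does not use CrewAI's API; the `Agent` class, the tool functions, and the hotel data are all hypothetical and return canned values instead of calling real services.

```python
from dataclasses import dataclass, field
from typing import Callable

@dataclass
class Agent:
    role: str
    goal: str
    tool: Callable[[dict], dict]  # one tool per agent keeps the sketch small
    memory: list = field(default_factory=list)

    def run(self, context: dict) -> dict:
        result = self.tool(context)
        self.memory.append(result)  # remember what this agent produced
        return {**context, **result}

def hotel_search(ctx):
    return {"hotels": ["Harbor Inn", "City Lodge"]}

def price_check(ctx):
    return {"prices": {"Harbor Inn": 120, "City Lodge": 95}}

def sentiment_analysis(ctx):
    return {"ratings": {"Harbor Inn": 4.6, "City Lodge": 3.9}}

def itinerary_creation(ctx):
    best = max(ctx["hotels"], key=lambda hotel: ctx["ratings"][hotel])
    return {"itinerary": f"Stay at {best} for ${ctx['prices'][best]} per night"}

crew = [
    Agent("Research Agent", "Find candidate hotels", hotel_search),
    Agent("Pricing Agent", "Check prices", price_check),
    Agent("Review Agent", "Score guest sentiment", sentiment_analysis),
    Agent("Planner Agent", "Create the itinerary", itinerary_creation),
]

context = {"destination": "Lisbon"}
for agent in crew:  # agents run in sequence, each building on the shared context
    context = agent.run(context)
print(context["itinerary"])
```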

Used in:

- Enterprise automation

- Financial analysis

- Research workflows

Agentic AI Architectures Explained

Agentic AI systems perform:

- Planning

- Tool selection

- Execution

- Review

with little or no human intervention.

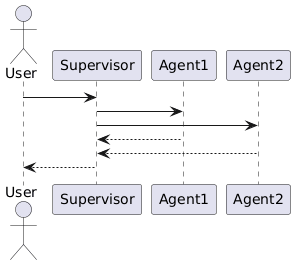

Supervisor Architecture

Central controller assigns tasks.

User Input

↓

Supervisor Agent

↓

Worker Agents

↓

Response
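
A minimal sketch of this pattern follows. The keyword-based routing is an assumption made to keep the example runnable; a real supervisor would typically ask an LLM which worker to use. The worker names and the `routes` table are hypothetical.

```python
def billing_worker(task):
    return f"[billing] handled: {task}"

def network_worker(task):
    return f"[network] handled: {task}"

WORKERS = {"billing": billing_worker, "network": network_worker}

def supervisor(user_input):
    """Assign the request to workers by keyword, then aggregate their replies."""
    # Keyword routing is a simplification; real supervisors usually let an LLM choose.
    routes = {"invoice": "billing", "refund": "billing", "vpn": "network", "wifi": "network"}
    words = user_input.lower().split()
    chosen = sorted({routes[word] for word in words if word in routes})
    replies = [WORKERS[name](user_input) for name in chosen]
    return "\n".join(replies) if replies else "No worker available for this request."

answer = supervisor("My VPN drops and my invoice is wrong")
print(answer)
```

The workers never talk to each other directly. All coordination passes through the supervisor, which makes the control flow easy to log and audit.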

Planner – Executor Pattern

Planner decides steps

Executor performs actions

User Input

↓

Planner Agent

↓

Task Breakdown

↓

Executor Agent

↓

API / DB Calls

↓

Reviewer Agent
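
The separation of planning, execution, and review can be sketched as three plain functions. The plan is hard-coded here, where a real planner would normally be an LLM call, and the entries in `TOOLS` are hypothetical stand-ins for API or database calls.

```python
def planner(request):
    """Break the request into ordered (tool_name, argument) steps."""
    # Hard-coded for the sketch; normally an LLM would produce this plan.
    return [("create_vm", "web-01"), ("open_port", 443)]

# Hypothetical tools standing in for API / DB calls.
TOOLS = {
    "create_vm": lambda name: {"vm": name, "status": "running"},
    "open_port": lambda port: {"port": port, "status": "open"},
}

def executor(steps):
    """Run each planned step with its tool and collect the results."""
    return [TOOLS[tool](arg) for tool, arg in steps]

def reviewer(steps, results):
    """Validate that every planned step produced a successful result."""
    return len(results) == len(steps) and all(
        result["status"] in {"running", "open"} for result in results
    )

steps = planner("Provision a web server reachable over HTTPS")
results = executor(steps)
approved = reviewer(steps, results)
print(approved, results)
```

Keeping the plan as data means it can be logged, inspected, or approved before anything is executed, which matters for the use cases listed next.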

Used in:

- DevOps automation

- Compliance audit

- Infrastructure provisioning

Enterprise Use Cases of Agentic AI

Agentic AI Architecture is used in:

- Automated ITSM ticket resolution

- Code migration tools

- Document compliance validation

- Customer onboarding automation

- Travel planning systems

- Fraud detection analysis

Popular LLM Models Used in Enterprise

| Model | Provider | Capability |

|---|---|---|

| GPT-4o | OpenAI | Balanced reasoning |

| Claude | Anthropic | Long context |

| Gemini | Google | Multimodal |

| Llama | Meta | Open source |

| Mistral | Mistral AI | Lightweight |

Pricing References:

OpenAI → https://platform.openai.com/pricing

Anthropic → https://www.anthropic.com/pricing

Google → https://ai.google.dev/pricing

AWS Bedrock → https://aws.amazon.com/bedrock/pricing

How This Helps in AI Engineer / Architect Interviews

Interviewers often expect candidates to explain:

- RAG Architecture

- Embeddings

- Agent Workflow

- LangChain vs LangGraph

- Multi Agent AI Systems

You can explain Agentic AI like this:

Planner Agent → Creates Plan

Executor Agent → Calls Tools

Reviewer Agent → Validates Output

Supervisor Agent → Final Response

Conclusion

Enterprise AI systems are evolving from:

Rule Based Automation

→ ML Prediction

→ Generative AI

→ Agentic AI Architecture

Future AI Architects will not just write prompts —

they will design autonomous AI workflow systems.

Frequently Asked Questions (FAQs)

What is the difference between Generative AI and Agentic AI?

Generative AI focuses on generating content (text, code, summaries) using Large Language Models.

Agentic AI goes a step further. It can:

– Plan tasks

– Decide actions

– Call tools or APIs

– Execute multi-step workflows

– Review outputs

In short:

Generative AI → Creates responses

Agentic AI → Takes decisions and performs actions

What is RAG Architecture and why is it important?

RAG (Retrieval-Augmented Generation) improves LLM responses by injecting enterprise-specific data into the prompt at inference time.

RAG is important because:

– It reduces hallucination

– It keeps answers up to date without retraining

– It gives the model access to enterprise knowledge

– It avoids expensive fine-tuning

RAG is one of the most common architectures for production LLM applications today.

How do embeddings work in AI systems?

Embeddings convert text into numerical vectors that represent semantic meaning.

Example:

"Payment failed"

→ [0.23, -0.67, 0.98, ...]

Similar sentences generate vectors close in space.

Embeddings enable:

– Semantic search

– Context retrieval

– Clustering

– Recommendation

In enterprise RAG systems, embeddings power the vector database.

When should we use RAG instead of fine-tuning?

Use RAG when:

– Data changes frequently

– You need real-time enterprise knowledge

– You want lower cost

– You need traceable document references

Use fine-tuning when:

– You need to adapt the model's behavior

– You need a domain-specific tone or format

– You need more reliable structured output

Most enterprises start with RAG before considering fine-tuning.

What is the difference between LangChain and LangGraph?

LangChain is used to build LLM-based applications with:

– Prompt templates

– Tool calling

– Memory

– RAG pipelines

LangGraph is used for:

– Multi-step reasoning

– Stateful agents

– Complex workflows

– Branching logic

What is the Planner–Executor pattern in Agentic AI?

The Planner–Executor pattern separates reasoning from execution.

User Input

↓

Planner Agent → Breaks into tasks

↓

Executor Agent → Calls tools/APIs

↓

Reviewer Agent → Validates result

This improves:

– Reliability

– Control

– Transparency

– Enterprise adoption

It is commonly asked in AI system design interviews.

What is Supervisor Agent Architecture?

In Supervisor architecture, a central agent coordinates multiple worker agents.

User

↓

Supervisor Agent

↓

Worker Agents

↓

Supervisor Aggregates Response

Used in:

– Multi-agent collaboration

– Research automation

– Enterprise workflow orchestration

This pattern enables scalable Agentic AI systems.

What are Multi-Agent AI Systems?

Multi-agent systems consist of specialized AI agents working collaboratively.

Example:

– Research Agent

– Pricing Agent

– Review Agent

– Planner Agent

Each agent has:

– Role

– Tools

– Goal

– Memory

Frameworks like CrewAI enable such architecture.

What are the main challenges in building Agentic AI systems?

Key challenges include:

– Hallucination control

– Tool misuse

– Latency management

– Cost optimization

– Observability and logging

– Workflow debugging

Enterprise systems must also ensure:

– Security

– Data privacy

– Compliance

– Auditability

This is where structured workflows (LangGraph) become important.