AI Friends — Building a Cost-Efficient Powerful Multi-Agent Generative AI System from Scratch

It all started early on a lazy Sunday morning. I was sitting on the couch reading WhatsApp messages from friends, but no one was online at that hour, so nobody was replying. That gave me the idea of a group chat with AI friends who could talk to me anytime, and I decided to build the app for fun.

Generative AI is transforming how we design digital experiences. But while most applications stop at simple chatbot implementations, the real innovation lies in multi-agent conversational AI systems — where multiple AI personas interact dynamically, debate, collaborate, and evolve within a structured architecture.

In this article, I share how I built AI Friends, a modular and cost-efficient generative AI application that simulates a real group chat experience using Large Language Models (LLMs). This project demonstrates how thoughtful AI chatbot architecture, structured orchestration, and cost optimization strategies can create powerful conversational systems — even at Proof of Concept (POC) stage.

What Is AI Friends?

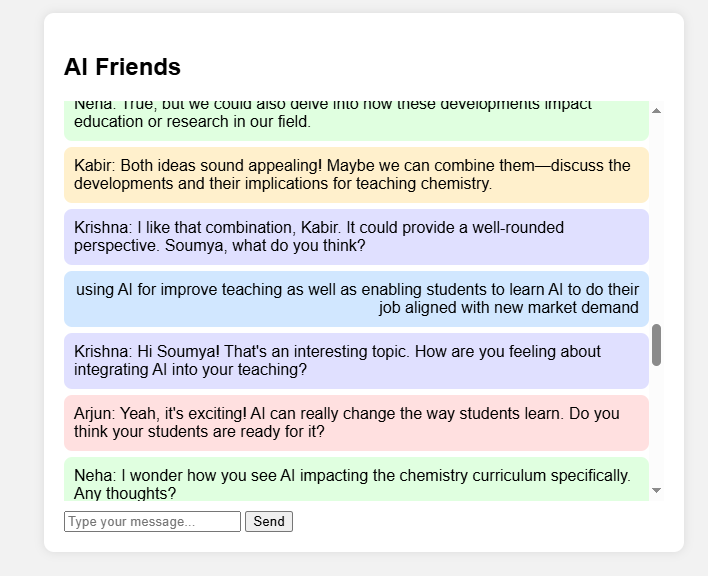

AI Friends is a multi-agent conversational AI application where a human interacts with three AI personas inside a group chat simulation.

Traditional chatbot systems follow a strictly alternating pattern:

Human → AI → Human → AI

This system instead models a group conversation:

Human ↔ AI Friend 1 ↔ AI Friend 2 ↔ AI Friend 3

Each AI friend:

- Has a distinct personality

- Aligns with the user’s age and interests

- Responds with humor and contextual awareness

- Interacts with other AI agents — not just the human

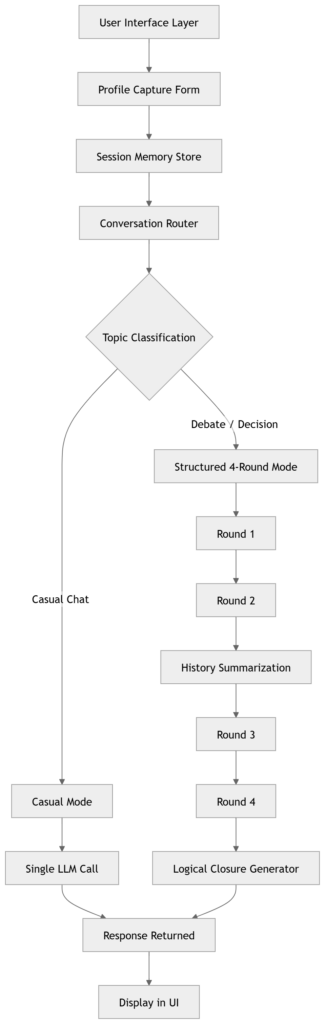

The system dynamically switches between:

- Casual conversational mode

- Structured debate mode (when the topic demands reasoning or decision-making)

The conversation unfolds over four structured rounds, ensuring a natural beginning, middle, and closure — avoiding infinite or chaotic dialogue drift.

This is not just a chatbot. It is a carefully orchestrated generative AI application architecture.

Why Multi-Agent Conversational AI Matters

The future of conversational AI is not one assistant responding to commands. It is:

- AI agents collaborating

- Structured debates

- Context-aware persona modeling

- Controlled reasoning flows

Multi-agent conversational AI enables:

- Richer engagement

- More nuanced discussions

- Decision simulations

- Role-based reasoning

These capabilities are highly relevant for:

- Education

- Corporate brainstorming

- Strategic planning simulations

- Behavioral experimentation

If you’re new to agent-based AI design, I recommend reading my article "First step to transition into AI Roles" to build context before diving deeper.

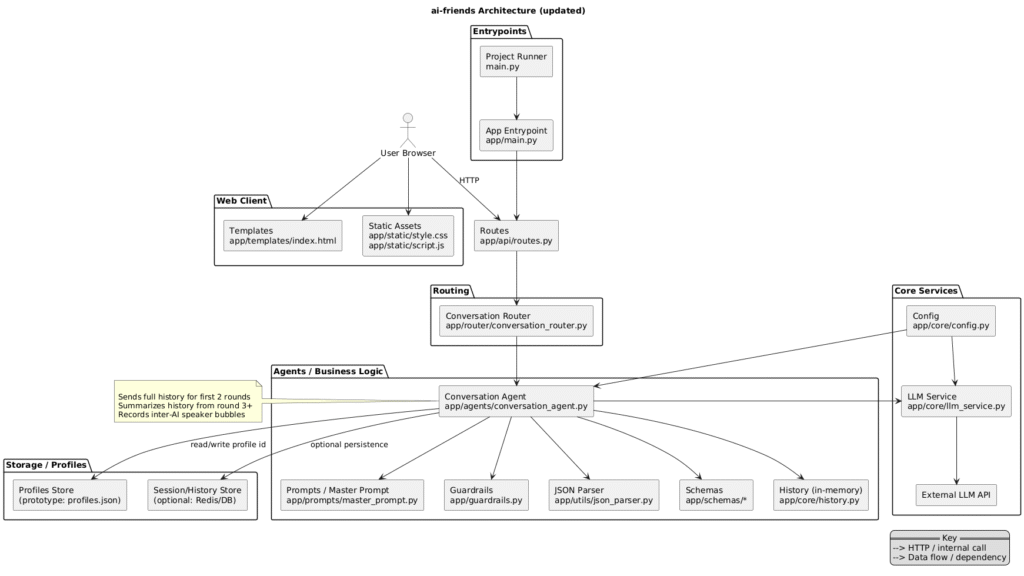

Architecture of the AI Chatbot System

One of my core goals was to build a clean, modular, and scalable AI chatbot architecture.

Instead of relying on heavy abstraction frameworks immediately, I designed a layered system that keeps responsibilities clearly separated.

Architecture Diagram

This visual representation helps readers understand the flow clearly.

UI Layer (FastAPI + Jinja2)

The frontend captures:

- Name

- Age

- Location

- Profession

- Interests

The chat interface:

- Displays separate message bubbles

- Shows AI friends individually

- Maintains conversational clarity

The UI is intentionally simple because architecture matters more than visual complexity at the POC stage.
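
As a rough illustration of the data the form hands to the rest of the system, the profile fields listed above could be held in a small immutable object. This is a minimal sketch, not code from the repository: the class name `UserProfile`, the helper `parse_interests`, and the assumption that interests arrive as one comma-separated string are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class UserProfile:
    """Profile fields captured by the form (names are illustrative)."""
    name: str
    age: int
    location: str
    profession: str
    interests: tuple[str, ...] = ()

    def as_prompt_context(self) -> str:
        """Render the profile as one line that a prompt can embed."""
        interests = ", ".join(self.interests) or "not specified"
        return (
            f"{self.name}, {self.age}, {self.profession} from {self.location}; "
            f"interests: {interests}"
        )


def parse_interests(raw: str) -> tuple[str, ...]:
    """Split a comma-separated form value into clean interest strings."""
    return tuple(part.strip() for part in raw.split(",") if part.strip())
```

Keeping the profile as plain data means the UI layer only collects and validates input, while the prompt layer decides how to phrase it.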

Routing Layer

The routing layer handles:

- Session state

- Round tracking

- Conversation mode switching

- Triggering summarization logic

This ensures:

- Business logic does not leak into UI

- Prompt logic does not leak into routing

This follows classic separation of concerns, a key principle in scalable AI system architecture.
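
The responsibilities listed above can be sketched as a small session object that the route handlers update after each round. This is an assumption-laden sketch rather than the project's actual code: `ChatSession`, `Mode`, and the two constants are hypothetical names, and only the four-round limit and the "summarize after round 2" rule come from this article.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import Optional

MAX_ROUNDS = 4        # the four-round lifecycle described in this article
SUMMARIZE_AFTER = 2   # summarization is triggered once round 2 is complete


class Mode(Enum):
    CASUAL = "casual"
    DEBATE = "debate"


@dataclass
class ChatSession:
    """Per-user state that the routing layer owns (hypothetical shape)."""
    round_no: int = 0
    mode: Mode = Mode.CASUAL
    history: list[str] = field(default_factory=list)
    summary: Optional[str] = None

    @property
    def finished(self) -> bool:
        return self.round_no >= MAX_ROUNDS

    def needs_summary(self) -> bool:
        """True exactly when round 2 is done and no summary exists yet."""
        return self.round_no == SUMMARIZE_AFTER and self.summary is None

    def complete_round(self, user_msg: str, replies: list[str], mode: Mode) -> None:
        """Record one finished round and the mode the model chose for it."""
        if self.finished:
            raise RuntimeError("conversation already closed after round 4")
        self.round_no += 1
        self.mode = mode
        self.history.append(f"User: {user_msg}")
        self.history.extend(replies)
```

Note that nothing in this object knows about prompts or templates, which is the separation the routing layer is meant to protect.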

Agent Orchestration Layer

This is the core intelligence coordinator.

A key architectural decision:

Generate all AI friend responses in a single LLM call.

Why?

Because multiple calls:

- Increase token usage

- Increase latency

- Increase cost

Instead, a structured master prompt instructs the model to produce:

- Three differentiated AI persona responses

- Inter-agent interaction

- Humor

- Logical flow

- Mode switching when necessary

This is an example of cost-efficient multi-agent AI system design.
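
One practical consequence of the single-call design is that the one reply has to be split back into separate chat bubbles. The sketch below assumes the master prompt asks the model for JSON of the form `{"mode": ..., "messages": [{"speaker": ..., "text": ...}]}`. That reply format, and the names `FriendMessage` and `parse_round_reply`, are my illustrative assumptions and may differ from what the repository does.

```python
import json
from dataclasses import dataclass


@dataclass(frozen=True)
class FriendMessage:
    speaker: str
    text: str


def parse_round_reply(raw: str, friends: list[str]) -> tuple[str, list[FriendMessage]]:
    """Turn one JSON reply from the model into per-friend chat bubbles.

    Assumed reply shape (not specified in the article):
    {"mode": "casual" or "debate", "messages": [{"speaker": ..., "text": ...}]}
    """
    data = json.loads(raw)
    mode = data.get("mode", "casual")
    messages = []
    for item in data.get("messages", []):
        speaker = item.get("speaker")
        text = str(item.get("text", "")).strip()
        # Drop empty lines and speakers that are not part of this group.
        if speaker in friends and text:
            messages.append(FriendMessage(speaker, text))
    # Every friend should speak at least once per round.
    missing = set(friends) - {m.speaker for m in messages}
    if missing:
        raise ValueError(f"no reply from: {sorted(missing)}")
    return mode, messages
```

Because the order of `messages` is preserved, friends can answer each other within the same round and the UI can render the bubbles in sequence.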

Prompt Layer: Embedded Intelligence

The master prompt contains:

- Persona instructions

- Humor guidelines

- Structured debate logic

- Guardrails and safety constraints

- Round-specific behavior rules

- Logical closure instruction for round 4

Rather than scattering logic across chains, tools, and nodes, this centralized approach keeps the system:

- Transparent

- Debuggable

- Predictable
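
To make the idea of a centralized prompt concrete, here is a minimal sketch of a builder that assembles the pieces listed above into one string. The function name `build_master_prompt`, the persona dictionary, and the wording of every instruction are hypothetical. Of the per-round rules, only the round-4 closure instruction is taken from this article.

```python
# Hypothetical per-round guidance; only the round-4 closure rule is from the article.
ROUND_RULES = {
    1: "Open warmly and react to the user's message.",
    2: "Build on or tease each other's earlier replies.",
    3: "Start narrowing toward a shared view.",
    4: "Bring the conversation to a logical close.",
}


def build_master_prompt(
    profile: str,
    personas: dict[str, str],
    round_no: int,
    context: str,
    user_message: str,
) -> str:
    """Assemble persona, guardrail, and round rules into a single prompt."""
    if round_no not in ROUND_RULES:
        raise ValueError(f"round must be 1-{len(ROUND_RULES)}, got {round_no}")
    persona_lines = "\n".join(f"- {name}: {style}" for name, style in personas.items())
    return "\n".join([
        "You are simulating a group chat between one human and their AI friends.",
        f"Human profile: {profile}",
        "Friends (keep each voice distinct):",
        persona_lines,
        "Friends may reply to each other, not only to the human. Keep the humor kind.",
        "If the topic calls for reasoning or a decision, switch to a structured debate.",
        "Stay safe and respectful; steer away from harmful topics.",
        f"Round {round_no} of {len(ROUND_RULES)}: {ROUND_RULES[round_no]}",
        f"Conversation so far:\n{context}",
        f"Human says: {user_message}",
    ])
```

Since everything lives in one function, printing the returned string shows exactly what the model sees for a given round, which is what makes this approach easy to debug.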

For readers interested in prompt engineering fundamentals, see OpenAI’s official guidance:

https://platform.openai.com/docs/guides/prompt-engineering

Summarization Layer

One of the biggest hidden risks in LLM systems is token explosion.

To implement strong AI cost optimization, the system uses:

- Full conversation history in rounds 1 and 2

- Automatic summarization after round 2

- Only summary passed in rounds 3 and 4

This dramatically reduces token growth while maintaining context.
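
The rule above can be sketched as one function that decides what context each round receives. This is a minimal sketch under stated assumptions: `context_for_round` is a hypothetical name, and `summarize` stands in for whatever LLM call produces the summary. The article does not say whether round 3's messages are folded back into the summary before round 4, so this sketch simply reuses the summary created after round 2.

```python
from typing import Callable, Optional

FULL_HISTORY_ROUNDS = 2  # rounds 1 and 2 receive the full transcript


def context_for_round(
    round_no: int,
    history: list[str],
    summary: Optional[str],
    summarize: Callable[[str], str],
) -> tuple[str, Optional[str]]:
    """Return (context to send to the model, summary to store in the session)."""
    transcript = "\n".join(history)
    if round_no <= FULL_HISTORY_ROUNDS:
        return transcript, summary
    # From round 3 onward only the summary is sent; create it once and reuse it.
    if summary is None:
        summary = summarize(transcript)
    return summary, summary
```

Passing `summarize` in as a parameter keeps this function free of any API client, so it can be exercised with a stub in tests.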

For corporate leaders evaluating generative AI scalability, this is critical. Cost control must be embedded at the architecture level, not retrofitted later.

AI Cost Optimization Strategy

AI applications can quickly become financially unsustainable without architectural discipline.

This system optimizes cost using:

- Single LLM call per round

- Structured 4-round lifecycle

- Automatic summarization

- No redundant inter-agent calls

- Controlled temperature settings

This approach aligns with best practices for controlling token costs in AI applications and scalable chatbot systems.
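
A quick back-of-the-envelope comparison shows how the call count changes. The sketch below counts LLM calls only, not tokens or price, and it assumes that summarization costs one extra call. The function names are illustrative.

```python
def calls_per_agent_design(agents: int, rounds: int) -> int:
    """One LLM call per agent per round (the design this system avoids)."""
    return agents * rounds


def calls_single_prompt_design(rounds: int, summary_calls: int = 1) -> int:
    """One LLM call per round, plus the assumed summarization call."""
    return rounds + summary_calls


print(calls_per_agent_design(agents=3, rounds=4))  # 12
print(calls_single_prompt_design(rounds=4))        # 5
```

Call count is only a proxy for cost, but it points in the right direction: in a per-agent design each call has to resend the shared instructions and conversation context, so fewer calls also means fewer repeated input tokens.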

Why Not Use LangChain or LangGraph?

This is a common question.

Frameworks like LangChain and LangGraph are powerful for:

- Tool chaining

- External API integration

- Complex decision graphs

- Multi-step reasoning workflows

However, for this multi-agent Proof of Concept, they were intentionally not used.

Reasons:

- No external tool orchestration required

- No retrieval-augmented generation needed

- No asynchronous graph branching required

- Direct orchestration was sufficient

Using a heavy framework prematurely can:

- Increase abstraction complexity

- Make debugging harder

- Reduce transparency

- Add overhead without benefit

Architectural foresight means introducing frameworks when complexity demands them — not before.

However, this system is designed to easily evolve into:

- LangGraph-based decision graphs

- Tool-augmented agents

- Memory-enabled systems

- RAG architectures

That is intentional extensibility.

Upgrade Possibilities

This architecture is intentionally modular to support future upgrades.

Persistent Memory

Add database storage for:

- Long-term persona adaptation

- Cross-session continuity

Retrieval-Augmented Generation (RAG) or Web Search

Integrate:

- Vector databases

- Knowledge retrieval

- Domain enrichment

- Tools to search the web for recent information

If you’re unfamiliar with RAG, explore Google’s research overview on retrieval-based generation:

https://research.google/pubs/

LangGraph Decision Trees

When debates require:

- Voting

- Decision scoring

- Conflict resolution workflows

LangGraph becomes valuable for orchestrating a decision-driven multi-agent architecture.

Real-Time Streaming

Enable:

- Token streaming

- Live typing effects

- Improved UX responsiveness

Specialized Agents

Each AI friend could:

- Use different temperature settings

- Have domain specialization

- Access different tools

The current modular design supports all these evolutions.

Development Timeline

🕒 Development time (POC): ~6 hours (using GitHub Copilot)

🛠 Fine-tuning, behavioral refinement, and architectural improvements: ~3 additional hours

This demonstrates:

- Rapid prototyping capability

- Strategic architectural planning

- Iterative system refinement

Copilot accelerated coding.

Architecture required intentional thinking.

Why This Matters for Corporate Leaders

For enterprise leaders evaluating AI initiatives:

- Architecture determines scalability.

- Cost optimization determines sustainability.

- Modularity determines extensibility.

- Guardrails determine safety.

This project demonstrates how a clean POC can already incorporate:

- Structured decision logic

- Persona-based design

- Safety embedding

- Cost discipline

- Extensibility pathways

- Guardrails to prevent discussions from taking a wrong course

Conversation Flow Diagram

The Bigger Vision

The future of generative AI systems lies in:

- Multi-agent collaboration

- Structured reasoning

- Cost-efficient orchestration

- Modular design

- Framework-agnostic foundations

AI Friends is not just a demo — it is a demonstration of architectural foresight.

It shows how:

- Generative AI applications can be structured intentionally

- Multi-agent conversational AI can be cost-efficient

- POCs can be built cleanly without overengineering

- Systems can evolve gracefully toward enterprise readiness

Final Thoughts

Generative AI is powerful. But powerful systems require thoughtful design.

AI Friends demonstrates that:

- Multi-agent conversational AI can be built efficiently.

- Architecture matters more than hype.

- Cost control is a design decision.

- Frameworks should be introduced intentionally.

- POCs can still reflect enterprise-grade thinking.

And if you’re curious, explore the code here:

🔗 GitHub Repository: https://github.com/sourav-learning/ai-friends

The future belongs to those who design before they deploy.